Grounded in peer-reviewed literature. Structured. Ready.

The data layer between scientific literature and your model. Starting with soil GHG.

Built on

The infrastructure underneath.

Solum sits between scientific literature and your work — built like the systems your engineers would build if they had the time. Schemas, validators, versioned snapshots, and an API your team can actually integrate with.

What Solum offers

solum-torch PyTorch adapter

Semantic search

JSON Schema · 70+ entity types

3-validator QA pipeline

Versioned snapshots

Shareable report links

One-click PDF export

DuckDB + REST API

4 controlled vocabularies

Search and filter 1000s of treatments by management practice, GHG type, soil texture, region — directly in the browser.

Click any value and see the exact figure or table it was extracted from — source PDF rendered alongside.

solum-torch · ML adapter

Drop-in PyTorch Dataset with covariates joined at query time. pip install and train.

Why you need Solum

Four cases. Same payoff.

"Audit on Friday. Every number has to defend itself."

Provenance down to the source figure and page. Every value chains paper → study → treatment → observation → source figure or table. The API serves the figure raster on demand. Citation bundles export the full trail.
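To make the chain concrete, here is a minimal Python sketch of how such a record could be modeled and walked back to its source. The record types, field names, and the `provenance_trail` helper are hypothetical illustrations, not Solum's actual schema or API. The values in the usage lines are placeholders, not real data.

```python
from dataclasses import dataclass

# Hypothetical record types mirroring the chain
# paper -> study -> treatment -> observation -> source figure or table.
# These names are illustrative only, not Solum's real schema.


@dataclass(frozen=True)
class Paper:
    doi: str
    title: str


@dataclass(frozen=True)
class Study:
    paper: Paper
    site: str


@dataclass(frozen=True)
class Treatment:
    study: Study
    practice: str


@dataclass(frozen=True)
class Observation:
    treatment: Treatment
    ghg: str
    value: float
    unit: str
    source: str  # the figure or table the value was read from, e.g. "Fig. 3"
    page: int


def provenance_trail(obs: Observation) -> list[str]:
    """Walk from one observation back up to its paper, one line per level."""
    treatment = obs.treatment
    study = treatment.study
    paper = study.paper
    return [
        f"paper: {paper.title} (doi:{paper.doi})",
        f"study: site={study.site}",
        f"treatment: {treatment.practice}",
        f"observation: {obs.ghg} = {obs.value} {obs.unit}",
        f"source: {obs.source}, p. {obs.page}",
    ]


# Placeholder values for illustration only; not real data.
paper = Paper(doi="10.0000/placeholder", title="Example field study")
obs = Observation(
    treatment=Treatment(study=Study(paper=paper, site="Site A"), practice="cover crop"),
    ghg="N2O",
    value=1.5,
    unit="kg N/ha/yr",
    source="Fig. 3",
    page=7,
)
for line in provenance_trail(obs):
    print(line)
```

Frozen dataclasses keep each level immutable once extracted, so a trail cannot silently change after it has been cited.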

"Pulling an effect-size table for cover crops × N₂O across Midwest sites."

Treatment-level resolution across study designs and observation methods — field experiments, incubations, remote sensing, modeling intercomparisons. Filter by practice, GHG, soil texture, region, design, and method. The exact subset you need in minutes.
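A filter like this maps naturally onto a parameterized SQL query. The sketch below uses Python's built-in `sqlite3` module as a stand-in for DuckDB so it runs with no extra installs. The table, the column names, and `filter_treatments` are hypothetical, not Solum's schema or REST API, and the rows are placeholders.

```python
import sqlite3

# Hypothetical filterable columns; Solum's actual schema is not shown here.
FILTER_COLUMNS = {"practice", "ghg", "soil_texture", "region", "design", "method"}


def filter_treatments(conn: sqlite3.Connection, **criteria: str) -> list[tuple]:
    """Return treatments matching every criterion given (logical AND)."""
    unknown = set(criteria) - FILTER_COLUMNS
    if unknown:
        raise ValueError(f"unsupported filter(s): {sorted(unknown)}")
    # Column names come only from the whitelist above; values are bound as parameters.
    where = " AND ".join(f"{col} = ?" for col in criteria) or "1 = 1"
    sql = (
        "SELECT treatment_id, practice, ghg, region FROM treatments "
        f"WHERE {where} ORDER BY treatment_id"
    )
    return conn.execute(sql, tuple(criteria.values())).fetchall()


# In-memory table with placeholder rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE treatments (treatment_id INTEGER, practice TEXT, ghg TEXT, "
    "soil_texture TEXT, region TEXT, design TEXT, method TEXT)"
)
conn.executemany(
    "INSERT INTO treatments VALUES (?, ?, ?, ?, ?, ?, ?)",
    [
        (1, "cover_crop", "N2O", "silt_loam", "Midwest", "field", "chamber"),
        (2, "cover_crop", "CO2", "silt_loam", "Midwest", "field", "eddy_covariance"),
        (3, "no_till", "N2O", "clay", "Midwest", "field", "chamber"),
    ],
)
print(filter_treatments(conn, practice="cover_crop", ghg="N2O", region="Midwest"))
```

Whitelisting the column names while binding only the values keeps user-supplied filters from being spliced into the SQL text.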

"Building a DNDC calibration set. The data has to match the model."

Calibration sets queryable by model system — DNDC, SALUS, ecosys — with CMIP5 climate and NASA POWER weather covariates joined at query time from spatial raster tiles.
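"Joined at query time" can be pictured as a point lookup: for each observation's coordinates, find the raster cell that contains it and attach that cell's value. Below is a minimal nearest-cell sketch under stated assumptions: one static layer per covariate, a hypothetical tile layout, and placeholder values. Real CMIP5 or NASA POWER layers would also carry a time index, and Solum's actual tiling and join logic are not described here.

```python
import math
from dataclasses import dataclass


@dataclass
class RasterTile:
    """A hypothetical regular lat/lon grid; row 0 is the southernmost band."""

    lat_min: float
    lon_min: float
    res_deg: float
    values: list[list[float]]  # values[row][col]

    def sample(self, lat: float, lon: float) -> float:
        # Find the grid cell that contains the point (no interpolation).
        row = math.floor((lat - self.lat_min) / self.res_deg)
        col = math.floor((lon - self.lon_min) / self.res_deg)
        if not (0 <= row < len(self.values) and 0 <= col < len(self.values[0])):
            raise ValueError(f"point ({lat}, {lon}) lies outside this tile")
        return self.values[row][col]


def join_covariates(observations: list[dict], tiles: dict[str, RasterTile]) -> list[dict]:
    """Attach one sampled value per covariate layer to each observation at query time."""
    joined = []
    for obs in observations:
        row = dict(obs)  # copy, so stored observations are never modified
        for name, tile in tiles.items():
            row[name] = tile.sample(obs["lat"], obs["lon"])
        joined.append(row)
    return joined


# A 2 x 2 grid of placeholder values at 1-degree resolution.
precip = RasterTile(lat_min=40.0, lon_min=-95.0, res_deg=1.0,
                    values=[[10.0, 11.0], [12.0, 13.0]])
print(join_covariates([{"obs_id": 1, "lat": 41.5, "lon": -93.5}], {"precip": precip}))
```

Sampling at query time, rather than storing covariates on every observation, means a new or revised layer is picked up without re-extracting anything.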

"Training a neural network. The data has to be real, not 30 hand-curated papers."

pip install solum-torch. Drop-in PyTorch Dataset class — covariates joined at query time, observation sequences pre-batched. Real experimental data, ready to train.
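A drop-in PyTorch Dataset is typically a map-style dataset: an object with `__len__` and `__getitem__` that a DataLoader can index. The sketch below shows that shape with observation sequences pre-grouped per treatment, using plain Python lists so it runs without torch installed. In real use you would subclass `torch.utils.data.Dataset` and return tensors. The class name, field names, and windowing rule are hypothetical and are not solum-torch's actual API.

```python
from collections import defaultdict


class TreatmentSequenceDataset:
    """Map-style dataset: one item per fixed-length window of a treatment's time series.

    Implements __len__ and __getitem__, the two methods a PyTorch map-style
    dataset provides. Field names ("treatment_id", "day", "flux") are hypothetical.
    """

    def __init__(self, rows: list[dict], covariate_keys: list[str], seq_len: int):
        if seq_len < 1:
            raise ValueError("seq_len must be >= 1")
        by_treatment = defaultdict(list)
        for r in rows:
            by_treatment[r["treatment_id"]].append(r)
        self.covariate_keys = covariate_keys
        self.windows = []
        for tid in sorted(by_treatment):
            series = sorted(by_treatment[tid], key=lambda obs: obs["day"])
            # Non-overlapping windows; a trailing partial window is dropped.
            for start in range(0, len(series) - seq_len + 1, seq_len):
                self.windows.append(series[start:start + seq_len])

    def __len__(self) -> int:
        return len(self.windows)

    def __getitem__(self, idx: int) -> tuple[list[list[float]], list[float]]:
        window = self.windows[idx]
        features = [[obs[k] for k in self.covariate_keys] for obs in window]
        targets = [obs["flux"] for obs in window]
        return features, targets


# Placeholder rows: treatment "A" has 5 daily observations, "B" has 2.
rows = [{"treatment_id": "A", "day": d, "precip": float(d), "temp": 10.0 + d, "flux": 100.0 + d}
        for d in range(1, 6)]
rows += [{"treatment_id": "B", "day": d, "precip": 0.0, "temp": 20.0, "flux": 50.0}
         for d in range(1, 3)]
ds = TreatmentSequenceDataset(rows, covariate_keys=["precip", "temp"], seq_len=2)
print(len(ds))  # 3 windows: A days 1-2, A days 3-4, B days 1-2
print(ds[0])
```

Building the windows once in `__init__` keeps `__getitem__` cheap, which matters when a DataLoader calls it many times per epoch.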

An asymmetric advantage.

Three roles. The same quiet edge.

Some teams are working from spreadsheets and memory. Some are working from a structured, peer-reviewed evidence layer with full provenance. The difference shows up in deadlines, audits, and bid pipelines.

For

The Researcher / Consultant

It's Friday. You've been staring at 47 PDFs since Tuesday. Your director needs the report by EOD.

Before

Three weeks of paper-by-paper extraction. Numbers you can't fully defend if anyone pushes back.

After

Done by Friday. Every number cited. Every claim defended.

What you tell your director

"I used a structured database of 1000s of peer-reviewed studies. Every number traces back to the exact figure or table it came from — paper, study, site, and source location. If anyone audits us, the citation bundle is one click away."

For

The Modeler / ML Engineer

Your DNDC calibration is overdue, or you're writing data scrapers instead of model code. Either way, data is the bottleneck.

Before

Calibration takes longer than the model run. Training sets built from 30 hand-curated papers. Provenance lives in a spreadsheet built from memory.

After

Pull a calibration set or training corpus in an afternoon. Real experimental data, joined with covariates, ready for the run or the loop.

What you tell the room

"Our model — process-based or neural — runs on the most comprehensive structured experimental dataset in the field. Every observation traces to a named paper, figure, and table. Competitors are working from synthetic data and spreadsheets."

For

The Practice Lead

Q3 review tomorrow. Your VP wants to know why utilization is at 60% and why the bid pipeline isn't bigger.

Before

Half of every project disappears into background research. Margins thin, headcount maxed, growth stalled.

After

Three more projects this quarter without adding headcount. Every project ships with a defensible literature foundation, in hours.

What you tell your VP

"We bought infrastructure. Solum gives every project a peer-reviewed evidence layer in hours instead of weeks. Utilization is up, margins recovered, and we doubled the pipeline."

Where Solum is going

Four stops on one road.

The data layer is the foundation. Three more destinations turn it into something a researcher, a model, or another AI can actually use. Each stop below is tagged honestly — what's shipping, what's committed, what we're exploring.

The Data Layer

The foundation. Peer-reviewed papers, structured at the treatment level, with full provenance.

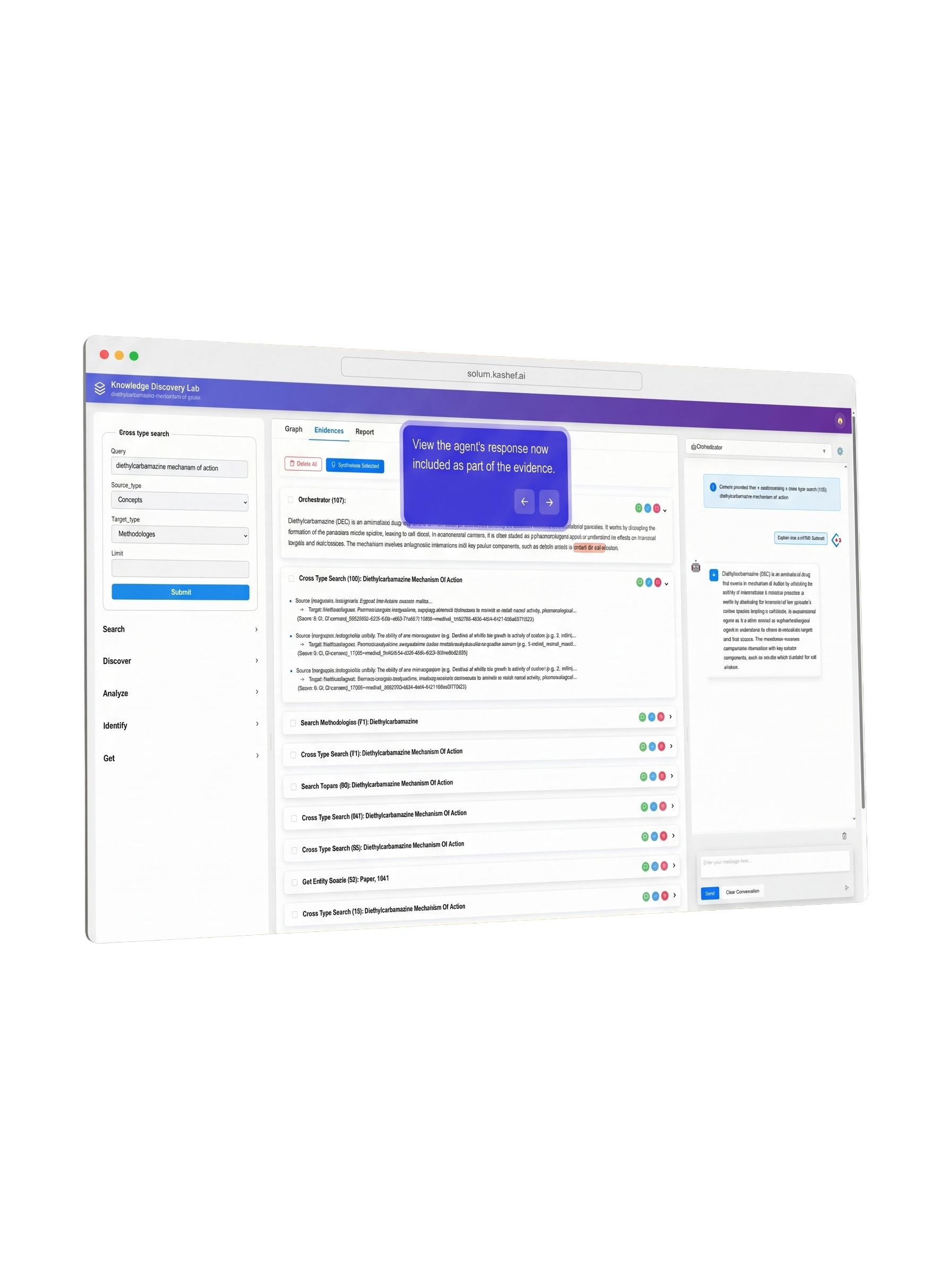

Solum Intelligence

A domain-aware analysis copilot. Ask in natural language — Solum runs the query, drafts the memo, cites the source.

Bring Your Own AI

Your agent. Your stack. Solum exposed as a first-class tool to whatever AI your team already uses.

pip install solum-torch on PyPI

Models as a Service

A single composite prediction — process modeling, neural networks, and remote-sensing data fused through data assimilation. Not a one-off calibrated run; a continuously updated estimate. Available in the UI, via API, or to your AI agent.

What's on this list moves forward when there's pull, not push. If a milestone matters to your team, tell us — we ship for customers, not for slides.

Pick the door that matches how you work.

Tier 1

Explorer

Researchers & students

Free

Forever

- 50-paper UI sample
- Search & filter
- CSV export

Custom

Enterprise

MRV providers, carbon-tech, ML groups

Let's talk

Pricing tailored to your team

- Full database + REST API
- Audit-ready provenance bundles
- solum-torch & custom extraction
- Dedicated support

The auditor question

"Where did your calibration data come from?"

A spreadsheet built from memory is not an answer that survives an audit. A database of 1000s of peer-reviewed studies with full provenance is. Solum exports a citation bundle for every calibration set — every data point traces back to a named paper, a named study, a named site.
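A citation bundle can be pictured as the calibration points grouped by where they came from. The minimal sketch below groups points by paper, study, and site and serializes the result as JSON. The field names, the JSON layout, and `citation_bundle` itself are assumptions for illustration, not the format Solum actually exports, and the identifiers are placeholders.

```python
import json


def citation_bundle(points: list[dict]) -> str:
    """Group calibration points under the paper, study, and site they trace back to."""
    grouped: dict[tuple[str, str, str], list[str]] = {}
    for p in points:
        key = (p["paper_doi"], p["study_id"], p["site"])
        grouped.setdefault(key, []).append(p["point_id"])
    sources = [
        {"paper_doi": doi, "study_id": study, "site": site, "point_ids": sorted(ids)}
        for (doi, study, site), ids in sorted(grouped.items())
    ]
    return json.dumps({"n_points": len(points), "sources": sources}, indent=2)


# Placeholder identifiers, for illustration only.
points = [
    {"point_id": "p2", "paper_doi": "10.0000/placeholder-a", "study_id": "s1", "site": "Site A"},
    {"point_id": "p1", "paper_doi": "10.0000/placeholder-a", "study_id": "s1", "site": "Site A"},
    {"point_id": "p3", "paper_doi": "10.0000/placeholder-b", "study_id": "s7", "site": "Site B"},
]
print(citation_bundle(points))
```

Sorting both the sources and the point IDs makes the output deterministic, so two exports of the same calibration set can be diffed directly.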

Methodology paper to be published in Scientific Data (Nature portfolio) later this year.